The rise of the outer planner

Why multi-agent orchestration is becoming the new LLM stack

Most early agent systems assumed a simple architecture: one planner, one reasoning loop, a sequence of tool calls, all resulting in the successful completion of one task.

There’s been a big shift from single agents to orchestrated agent systems because single-agent systems hit limits quickly:

Context collapse: one agent trying to do everything fills its context window with noise.

Tool overload: too many tools degrade routing accuracy.

Long-horizon drift: long-running tasks drift off course.

Reflection loops: the agent gets stuck reflecting instead of making progress.

Sequential execution: a single agent is inherently sequential.

The latest agentic workflows have shifted the conversation from “what can one agent do?” to “how can many agents work together?” However, multi-agent frameworks have often lacked a principled mechanism for ensuring the quality and completeness of the final output.

I recently read an ICLR 2026 paper, “Verified Multi-Agent Orchestration” (VMAO), which proposes a structured orchestration layer for multi-agent systems.

Orchestration-level verification

The main point of the paper is that reliability in multi-agent systems cannot be left to individual agent reasoning. Instead, it must be enforced by the orchestrator.

At a high level, the architecture separates global task coordination from specialized agent execution. The orchestrator acts as a global planner that decomposes and assigns tasks, manages dependencies, and tracks execution across worker agents. Meanwhile, the worker agents do their own local planning: deciding which tool to call, reasoning through steps, and adapting to observations.
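To make that split concrete, here is a minimal sketch of how I picture it. None of these names (`SubTask`, `Worker`, `Orchestrator`) come from the paper, and the keyword-based tool choice is only a stand-in for an LLM's local reasoning. The point is just who owns which decision: the orchestrator owns assignment, dependencies, and status, while the worker owns tool selection.

```python
from dataclasses import dataclass, field

@dataclass
class SubTask:
    task_id: str
    question: str
    agent: str                                   # specialist chosen by the orchestrator
    depends_on: list = field(default_factory=list)
    status: str = "pending"                      # becomes "done" once a worker finishes
    result: str = ""

class Worker:
    """Local planning: the worker, not the orchestrator, picks the tool."""
    def __init__(self, name, tools):
        self.name = name
        self.tools = tools                       # hypothetical mapping: keyword -> tool function

    def run(self, task):
        # Stand-in for LLM tool routing: the first keyword found in the question wins.
        for keyword, tool in self.tools.items():
            if keyword in task.question.lower():
                return tool(task.question)
        return f"{self.name}: no suitable tool"

class Orchestrator:
    """Global planning: checks dependencies, dispatches tasks, tracks status."""
    def __init__(self, workers):
        self.workers = workers

    def run(self, tasks):
        by_id = {t.task_id: t for t in tasks}
        pending = list(tasks)
        while pending:
            runnable = [t for t in pending
                        if all(by_id[d].status == "done" for d in t.depends_on)]
            if not runnable:
                raise ValueError("dependencies can never be satisfied")
            for task in runnable:
                task.result = self.workers[task.agent].run(task)
                task.status = "done"
                pending.remove(task)
        return by_id

workers = {
    "financial": Worker("financial", {"revenue": lambda q: "looked up filings"}),
    "legal": Worker("legal", {"regulation": lambda q: "searched statutes"}),
}
tasks = [
    SubTask("t2", "Which regulation applies to that revenue stream?", "legal", depends_on=["t1"]),
    SubTask("t1", "What was the revenue last year?", "financial"),
]
state = Orchestrator(workers).run(tasks)
```

Even though `t2` is listed first, the orchestrator holds it back until `t1` is done, and it never needs to know which tool either worker ended up calling.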

The authors argue that current systems fail because they treat agent collaboration as a linear or conversational process without a supervisor that understands the global state of the goal. VMAO introduces a structured, iterative loop that treats the collective output of multiple specialized agents as a draft that must be formally verified against the original query before a final answer is synthesized.

From Conversation to DAGs

To understand why this matters, we have to look at how the field has evolved:

Single agent: Chain-of-Thought and ReAct patterns focused on a single model’s internal reasoning.

Social multi-agent: Frameworks like AutoGen introduced debate or role-play, but these were often stochastic and prone to drift, where agents agree with each other but move away from the actual user intent.

Multi-agent orchestration: This paper moves away from conversational metaphors and toward Directed Acyclic Graphs (DAGs).

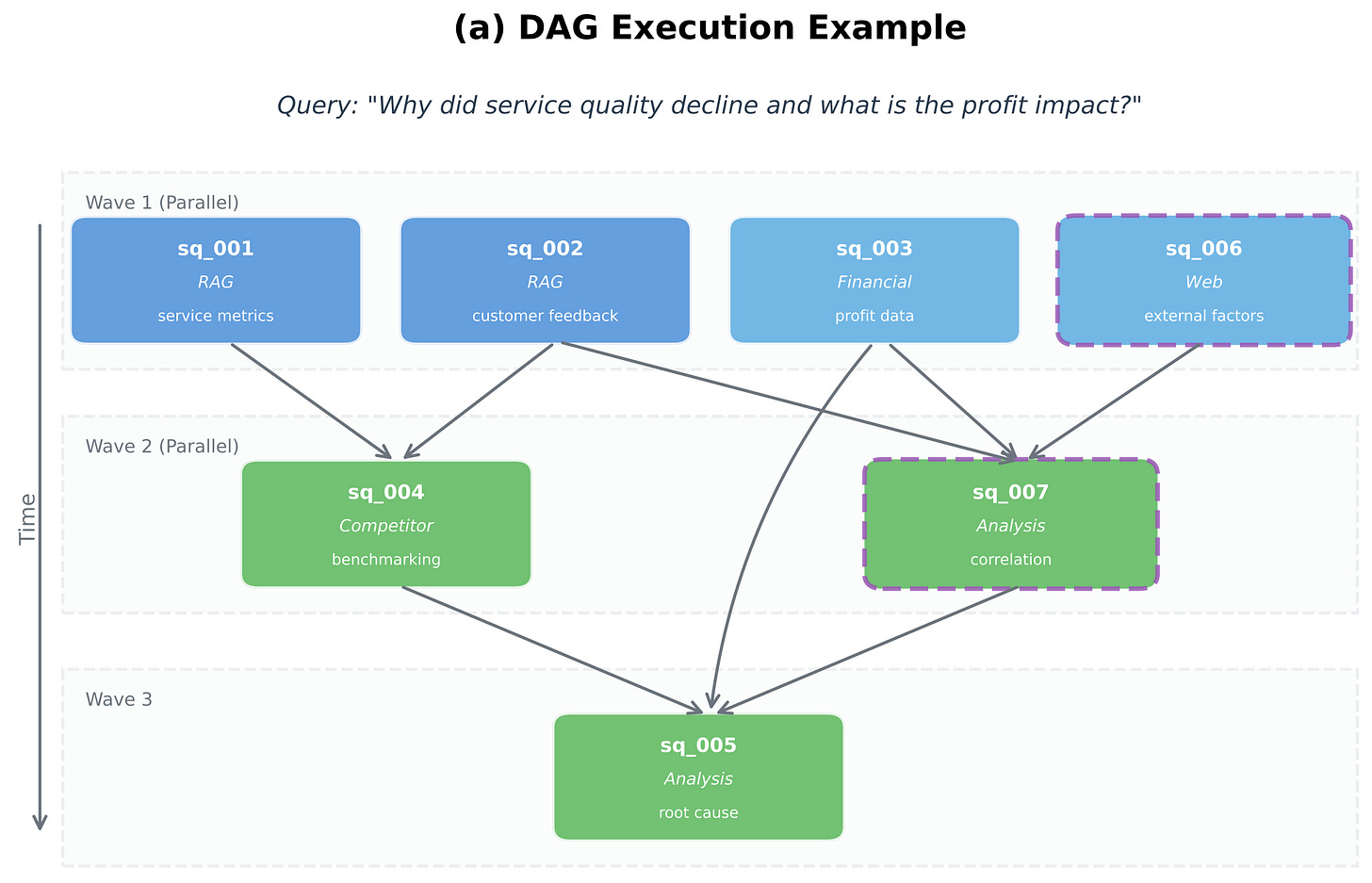

By representing task decomposition as a DAG, the system can handle complex dependencies and parallelize execution while maintaining a deterministic path for context propagation. This starts looking much closer to distributed systems than prompt engineering.
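Here is a rough sketch of what DAG-driven execution can look like, using Python's standard-library `graphlib.TopologicalSorter` and a thread pool. The task names, the `run_agent` stub, and the batch-by-batch scheduling are my own assumptions for illustration, not the paper's implementation.

```python
from concurrent.futures import ThreadPoolExecutor
from graphlib import TopologicalSorter

# Hypothetical sub-question graph: each task maps to the set of tasks it depends on.
DEPENDENCIES = {
    "revenue": set(),
    "regulation": set(),
    "risk_summary": {"revenue", "regulation"},
}

def run_agent(task, context):
    """Stand-in for a worker agent; a real system would call an LLM here."""
    used = ", ".join(f"{name}={value}" for name, value in sorted(context.items()))
    return f"{task}({used})"

def execute_dag(dependencies):
    """Run tasks in dependency order, executing each ready batch in parallel."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()                      # also raises CycleError if the graph has a cycle
    results, waves = {}, []
    with ThreadPoolExecutor() as pool:
        while sorter.is_active():
            ready = sorted(sorter.get_ready())   # sorted so the order is repeatable
            waves.append(ready)
            # Context propagation: a task only sees results from its declared dependencies.
            futures = {
                task: pool.submit(
                    run_agent, task, {dep: results[dep] for dep in dependencies[task]}
                )
                for task in ready
            }
            for task, future in futures.items():
                results[task] = future.result()
                sorter.done(task)
    return waves, results

waves, results = execute_dag(DEPENDENCIES)
```

In this example `revenue` and `regulation` run in the same batch, and `risk_summary` runs only after both have finished, receiving exactly their two results as context. A production scheduler would probably start each task as soon as its own dependencies finish rather than waiting for the whole batch.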

How VMAO Works: The Five-Phase Loop

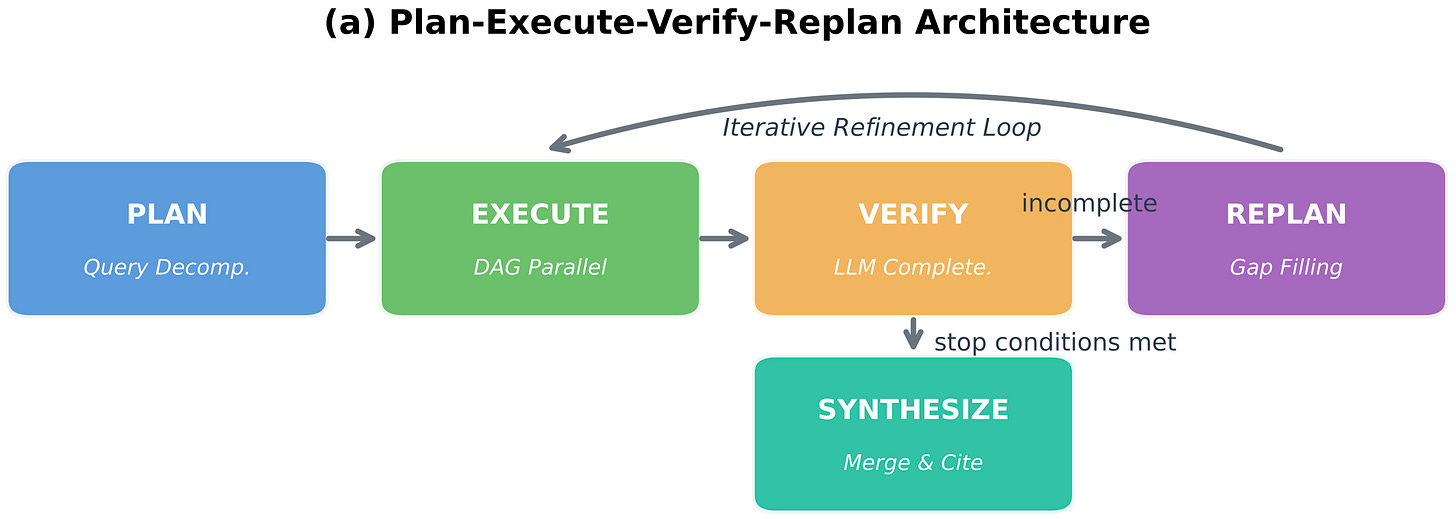

The system architecture proposed by the VMAO paper combines several ideas that have independently emerged across the industry over the last year. The framework operates on a “Plan-Execute-Verify-Replan” cycle. Instead of solving a task end-to-end inside a single reasoning loop, the orchestrator decomposes work into smaller units.

Plan: The orchestrator decomposes a high-level query into sub-questions, assigning them to specialized agents (e.g., a Financial Agent vs. a Legal Agent) and mapping their dependencies.

Execute: Agents process sub-questions in parallel where the DAG allows.

Verify: An LLM-based Verifier (acting as a separate orchestration signal) evaluates the combined results for completeness and source quality.

Replan: If the Verifier identifies gaps (e.g., “The competitor’s 2025 revenue was missing from the analysis”), the system generates new sub-questions to fill those specific holes.

Synthesize: Once stop conditions are met, a final Synthesizer compiles the verified data into a coherent response with proper attribution.
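The five phases can be sketched as a small control loop. Everything here is a hypothetical stand-in: the stub functions replace LLM calls, `REQUIRED` fakes the verifier's notion of completeness, and the stop conditions (no gaps found, or `max_rounds` reached) are my assumption about what reasonable stop conditions could be.

```python
# Hypothetical completeness criteria; a real verifier would be an LLM judging the results.
REQUIRED = {"market size", "competitor 2025 revenue"}

def plan(query):
    # Deliberately incomplete first plan, so the replan path gets exercised.
    return ["market size"]

def execute(sub_questions):
    # Stand-in for specialized agents answering their sub-questions.
    return {q: f"answer to: {q}" for q in sub_questions}

def verify(query, findings):
    # Returns the list of gaps; an empty list means the findings look complete.
    return sorted(REQUIRED - set(findings))

def replan(gaps):
    # Turn each gap into a new, targeted sub-question.
    return list(gaps)

def synthesize(query, findings):
    return "; ".join(f"{k} -> {v}" for k, v in sorted(findings.items()))

def orchestrate(query, max_rounds=3):
    sub_questions = plan(query)
    findings = {}
    rounds = 0
    for rounds in range(1, max_rounds + 1):
        findings.update(execute(sub_questions))
        gaps = verify(query, findings)
        if not gaps:                      # stop condition 1: verifier is satisfied
            break
        sub_questions = replan(gaps)      # only the missing pieces are re-run
    # stop condition 2: max_rounds exhausted; synthesize whatever was verified so far
    return synthesize(query, findings), rounds

answer, rounds = orchestrate("How does our product compare to the competitor?")
```

With these stubs the first round leaves the competitor revenue gap open, the verifier flags it, and the second round fills it, so the loop ends after two rounds. Note that the decision to stop belongs to `verify`, not to the agents that produced the findings.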

The paper treats evaluation as a runtime control mechanism rather than an offline benchmarking step. This is a major departure from earlier generations of agent systems, where evaluation was done offline through benchmark suites and was often disconnected from actual execution. Making evaluation part of the orchestration itself is, I think, the most important architectural change proposed in the paper. In other words, the future agent stack looks more like distributed systems engineering than traditional machine learning.

Where the Paper Falls Short

While VMAO is a significant step toward production-grade multi-agent systems, it has two primary blind spots:

Ironically, despite emphasizing evaluation through orchestration, the paper doesn’t really solve the verifier paradox. The system relies on an LLM-based Verifier to check other LLMs. This creates a recursive reliability problem. If the Verifier itself misses a nuance or hallucinates a “completeness” check, the entire loop fails. The paper doesn’t deeply explore formal methods to verify the Verifier.

Secondly, cost and latency are still major unresolved issues. The iterative replanning loop, while thorough, is computationally expensive. In the authors’ market research benchmarks, completeness improved by 35%, but at the cost of significantly higher token usage and wall-clock time compared to single-pass systems. It’s a “Deep Research” mode that isn’t yet optimized for real-time applications.

Final Thoughts

I think this paper captures an important maturation point in the field. We are moving away from vibes-based agent collaboration. The most important insight is probably that orchestration isn’t just glue code around the model; it is the reliable coordination of many reasoning systems. A key takeaway for me: don’t ask the agents if they’re done, ask a separate verifier if the plan has been satisfied.

References

Verified Multi-Agent Orchestration: A Plan-Execute-Verify-Replan Framework for Complex Query Resolution. (2026). arXiv:2603.11445.